The result of a project by Dadabots, it was created by feeding a 2011 album called Diotima by Krallice into a neural network. The songs were broken down into shorter chunks. The neural network was then asked to predict the next section of the track, before being told whether or not it was correct. This is a standard method for training a neural network. Over three days, it made as many as five million guesses as to how a black metal song should sound.
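
The chunk-and-predict setup described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not Dadabots' actual code: the function names, the chunk and context lengths, and the toy integer "audio" are all hypothetical, and the simple guessing rule stands in for a real neural network.

```python
from typing import Callable, List, Tuple


def split_into_chunks(samples: List[int], chunk_len: int) -> List[List[int]]:
    """Cut a long track into consecutive fixed-length chunks (a short tail is dropped)."""
    return [samples[i:i + chunk_len]
            for i in range(0, len(samples) - chunk_len + 1, chunk_len)]


def make_training_pairs(chunk: List[int], context_len: int) -> List[Tuple[List[int], int]]:
    """Pair each window of `context_len` samples with the sample that follows it."""
    return [(chunk[i:i + context_len], chunk[i + context_len])
            for i in range(len(chunk) - context_len)]


def count_correct(pairs: List[Tuple[List[int], int]],
                  predict: Callable[[List[int]], int]) -> int:
    """Ask `predict` for a guess on each context and count the guesses that match."""
    return sum(1 for context, target in pairs if predict(context) == target)


# Toy "audio": a repeating ramp standing in for quantised sample values (assumption).
track = [i % 4 for i in range(16)]
chunks = split_into_chunks(track, chunk_len=8)
pairs = make_training_pairs(chunks[0], context_len=3)


def naive_guess(context: List[int]) -> int:
    """Hypothetical stand-in for the network: guess 'last sample + 1', wrapped at 4."""
    return (context[-1] + 1) % 4


correct = count_correct(pairs, naive_guess)
```

In real training, the feedback on each guess would be used to adjust the network's weights so that later guesses improve; that update step is omitted here.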

This isn’t the first time an AI has been used in a creative field: AI systems have also composed other forms of music, produced visual artwork, and written stories and short articles. Beyond the question of whether AI-created art can compare to the “human touch”, the issue of authorship is front and centre in this debate, as an AI cannot yet legally claim to have invented or created something. The humans who own or design the system in question are still the key holders of copyright and IP, but ascertaining the exact protocol at this early stage is vital for smooth regulation.

Dadabots’ CJ Carr and Zack Zukowski spoke to the Robotics Law Journal about the project and about AI as a creative force with implications both artistic and legal.

What inspired you to program an AI to create/replicate music?

We saw the potential of deep neural networks when image style transfer was released. It was amazing when we saw photographs transform into impressionist oil paintings. We had been researching ways to model musical style and generate music with a target timbre. It seemed that deep learning might be the tool we were looking for once Wavenets had been able to synthesise human voices in multiple languages.

Can you describe the process of creating this black metal album?

At first, we had a very difficult time getting good-sounding audio to generate. We extended the size and complexity of our network until we hit the limits of our GPU memory. Then, we found a middle ground for performance and sound quality. It was a lot of trial and error. As we improved our results, we began to notice that the hazy atmospheric quality of the SampleRNN-generated audio lent itself nicely to the lo-fi black metal style. We remade models based on a few different albums. Since we were not conditioning based on musical sections, we had no control over the content of the output. Some models would overfit to learn the details of one part while ignoring the rest of the music in the dataset. This led us to gradually discover the optimal process after about a dozen attempts.

How long did it take to get it to its final state?

We spent months experimenting with different datasets. Our final model trained in a little over three days. We then had to curate the output by picking the best audio. If everything works as expected, an album can be fully made in about four days.

What sort of feedback have you had on it?

The general feedback is that it is not good, but it succeeds at generalising the atmospheric black metal style. It sounds human! Many people were intrigued by its ability to replicate the guitar tone and screaming vocals. It was given low to mid reviews on the music sites we saw, but we were enthused to be getting any.

Did you need to request permission from the original artist?

We did not ask permission. The focus was on scientific research and we were not selling any of the generated music. However, we did contact the band after finishing it. They were intrigued by the project and had suggestions for us. In an ideal world, researchers and artists will collaborate, but that shouldn't stop people from experimenting with well-known music. We have found that using a dataset an audience is familiar with can greatly help them to understand intuitively what is happening in the process.

Is AI going to be a creative force going forward? And do human creators need to fear for their jobs?

It will become more common and accessible all the time. This doesn't mean that creative professionals will be replaced anytime soon. If designed with people in mind, AI can be used to enhance our creative power.

In this case, the AI was used to recreate existing music, so the issue of authorship does not really come up. At what point can it be said that the AI has created something truly new?

The lines of ownership become blurred when multiple artistic sources are brought into the training dataset and the generated output is a generalised blend of each. This is very similar to how humans learn to be original in a new medium: first learning to imitate the masters and then hybridising styles.

Will your AI be able to generate new music purely on its own at some stage? Is it just a case of feeding it enough data to teach it?

We would need to add more functionality for it to choose its own music to generate. Having access to more kinds of music and a way to condition between artists or styles would allow something that resembles a self-evolving musical taste.

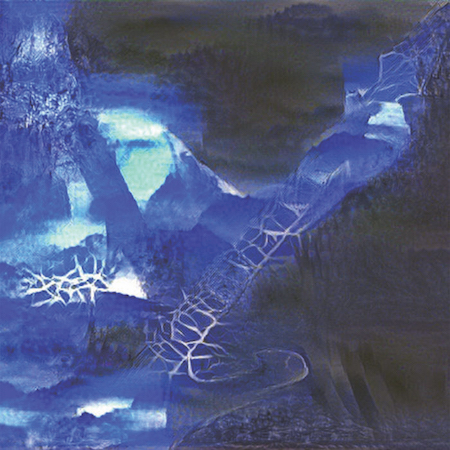

Inorganimate, another musical experiment by Dadabots

At what point can the AI be said to be an author?

To call AI the author, it should be able to freely explore a wide variety of styles and have a way to improve based on the reaction to its work via sensory input. In other words, AI would need to pick what music to create on its own. This could be based on audience or critics' feedback.

And when it is credited as such, who would be able to claim the copyright over the work?

It's got to be case by case. It might be the owner of the computer if the code was sold to that user or company. If the code was made public (open-source), its output might have no copyright.

Do you think it’s a good or bad thing to recognise an AI as a creator?

It's a bad thing.

Do you think there is a reluctance to recognise it as such? If so, why do you think that is?

Machines aren't autonomously creative yet. They are still being told what is good and what to learn by humans. The vision of designing machines as assistants rather than automators was a popular direction discussed at NIPS this year. A Senior Lecturer at Goldsmiths University, Dr. Rebecca Fiebrink, conducts this type of work on designing human-computer interactions. It seems like a good direction to head for the time being.

Can you envision a future where robot personhood is fully realised and the AI can be credited as the creator and copyright owner?

Maybe in the distant future when we have advancements in hardware and much faster software.

What other projects are you embarking on with AI? What’s next for you?

We have a huge list of future work and research with audio. We are going to continue to put out weekly albums using the models we have already trained, and work with artists to develop their artificial likeness.

Final thoughts

Artists and researchers should be protected by fair use for noncommercial purposes. The ability to share what is becoming possible raises unanswered questions, and is of legitimate concern to the public. It educates. Seeing this work encourages new kinds of people to study the art of machine learning.

What we don’t want is a company making millions purposely imitating artists’ likeness with those artists not getting paid. It seems like exploitation. Copyright law needs to encourage development of the art form. Hearing a favourite artist's style performed by a neural network is not only cool, but it highlights the quality of transformation, making it apparent how it went from the original to the generated version.

If running a neural network to generate music for commercial purposes, the good faith thing to do is ask permission and work out a royalty split with the original artist.

Then it becomes a collaboration. Both benefit from the promotion. It gets hard to negotiate that split when the dataset combines multiple artists, maybe tens of thousands of them. It could be difficult to deal with the major record labels on who gets the split.

Is it who owns the songwriting? Is it who owns the master recordings? Is “style” different from “likeness”? Does an artist own their own style? Isn’t style derivative from others’ styles? We legally tolerate bands deriving styles from other bands; at what point do we say this is okay for machine art?

Again, these things might be case by case. It may depend on how well the neural network overfit or generalised, the complexity of the dataset, and how much the original is audible in the output. Maybe creativity and copyright will need to be disconnected to protect the income of professionals in the industry. We will probably see new intellectual rights groups created to solve this issue.
